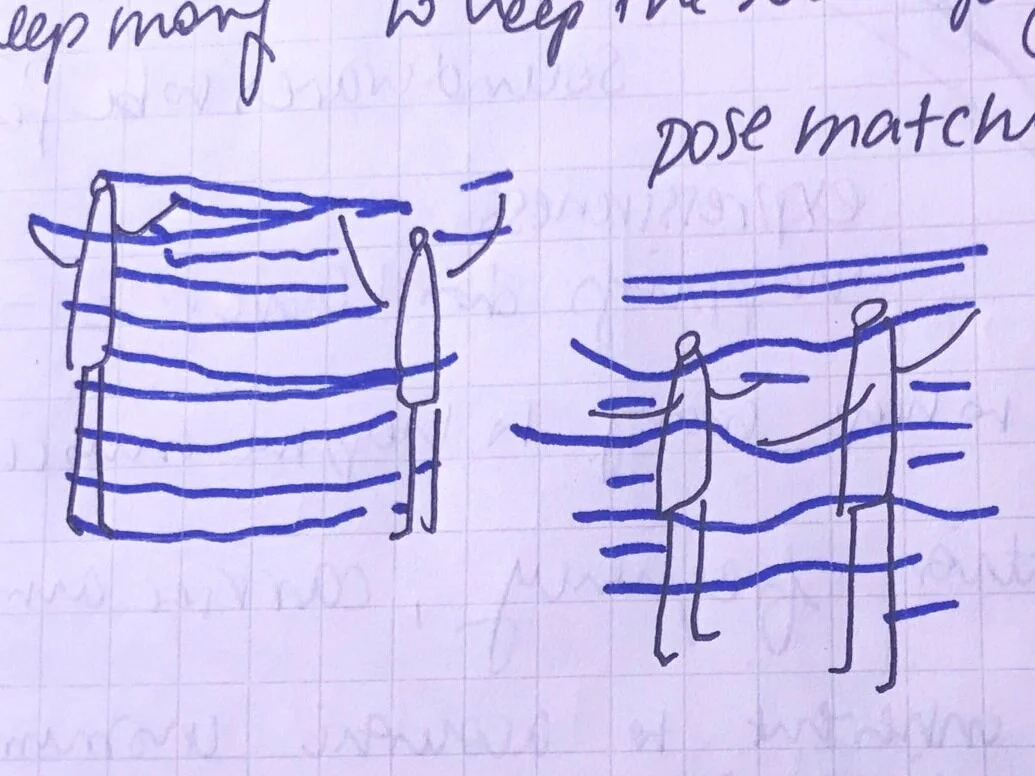

In my first user scenario, I am thinking about tying how synchronized the movements of two people are to the phrasing of the sounds. In other words, if the movements are out of sync, the phrases of the rhythms would be shifted by a certain amount. The more out of sync the movements are, the larger the shift.
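To pin down the logic of this first scenario, here is a rough sketch in Python rather than Max. It assumes I already have some `desync` value between 0 (perfectly in sync) and 1 (completely out of sync); how that value gets measured is still open. The function names and the choice to cap the shift at half the pattern length are my own placeholders, not anything from an existing patch.

```python
def sync_to_shift(desync: float, steps: int = 16) -> int:
    """Map a desync value in [0, 1] to a shift measured in steps.

    Assumption: the maximum shift is half the pattern length.
    """
    desync = min(max(desync, 0.0), 1.0)  # clamp out-of-range input
    return round(desync * (steps // 2))


def shift_pattern(pattern: list[int], shift: int) -> list[int]:
    """Rotate a step pattern to the right by `shift` steps."""
    if not pattern:
        return []
    shift %= len(pattern)
    if shift == 0:
        return list(pattern)
    return pattern[-shift:] + pattern[:-shift]


# Person A keeps the original phrase, person B's phrase drifts with desync.
phrase = [1, 0, 0, 0, 1, 0, 0, 0, 1, 0, 0, 0, 1, 0, 0, 0]
shifted = shift_pattern(phrase, sync_to_shift(0.25, steps=len(phrase)))
```

With a 16-step phrase, a desync of 0.25 moves the second phrase two steps later, so the two rhythms start to interlock instead of landing together.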

The second user scenario concerns the density of the resulting rhythm. If there is a lot of changing movement between the two people, more instruments would be added to the overall sound. The less movement, the barer the sound.
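The same kind of sketch works for the second scenario. This one assumes a `movement` value between 0 and 1 that summarizes how much the two people are moving, and a made-up list of instrument layers. Keeping at least one layer playing even when nobody moves is my own assumption, so the sound goes bare rather than silent.

```python
# Hypothetical instrument layers, ordered from most basic to most decorative.
LAYERS = ["kick", "hi-hat", "snare", "bass", "pad"]


def active_layers(movement: float, layers: list[str] = LAYERS) -> list[str]:
    """Return the layers that should play for a movement amount in [0, 1].

    Assumption: one layer always plays, and more are added as movement grows.
    """
    movement = min(max(movement, 0.0), 1.0)  # clamp out-of-range input
    count = 1 + int(movement * (len(layers) - 1))
    return layers[:count]
```

For example, `active_layers(0.0)` leaves only the kick, while `active_layers(1.0)` brings in all five layers.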

some thought exercises about sound, space and movement:

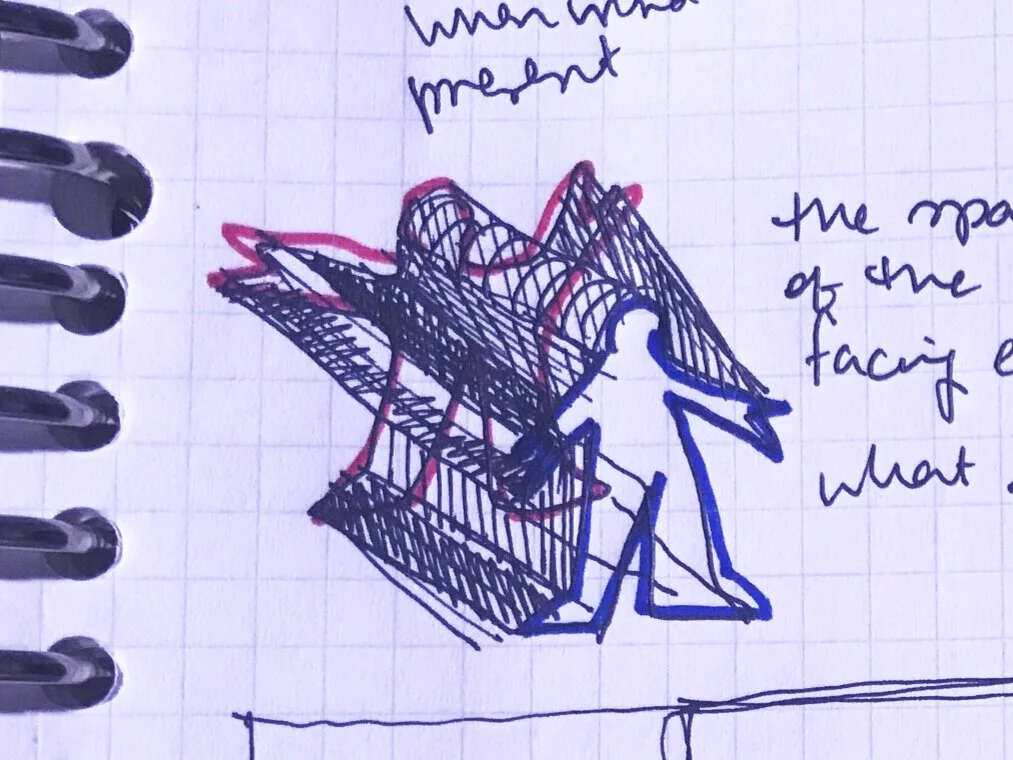

what if we imagine that the imaginary ether flowing past us is silent sound that can only be heard when we agitate it by moving our bodies and disrupting the flow?

what would the resulting volume between two people look like?

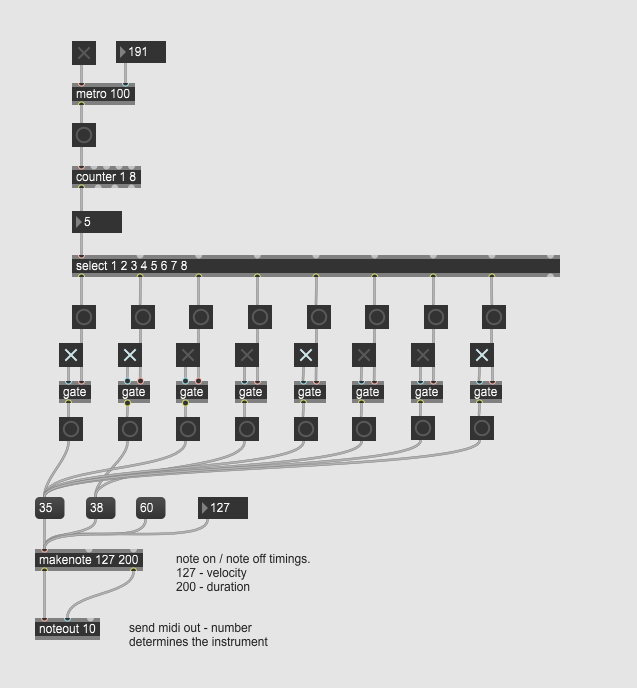

While I have these ideas in mind, I am still in the midst of figuring out Max MSP. I think Max would be a really useful tool for this project because it's dynamically configurable. However, I have not yet been able to implement the scenarios that I have in mind. So far I have a step sequencer patch set up that can trigger different MIDI sounds on a controlled beat grid. I am working towards figuring out how to use this for layering different sounds and for phase shifting.