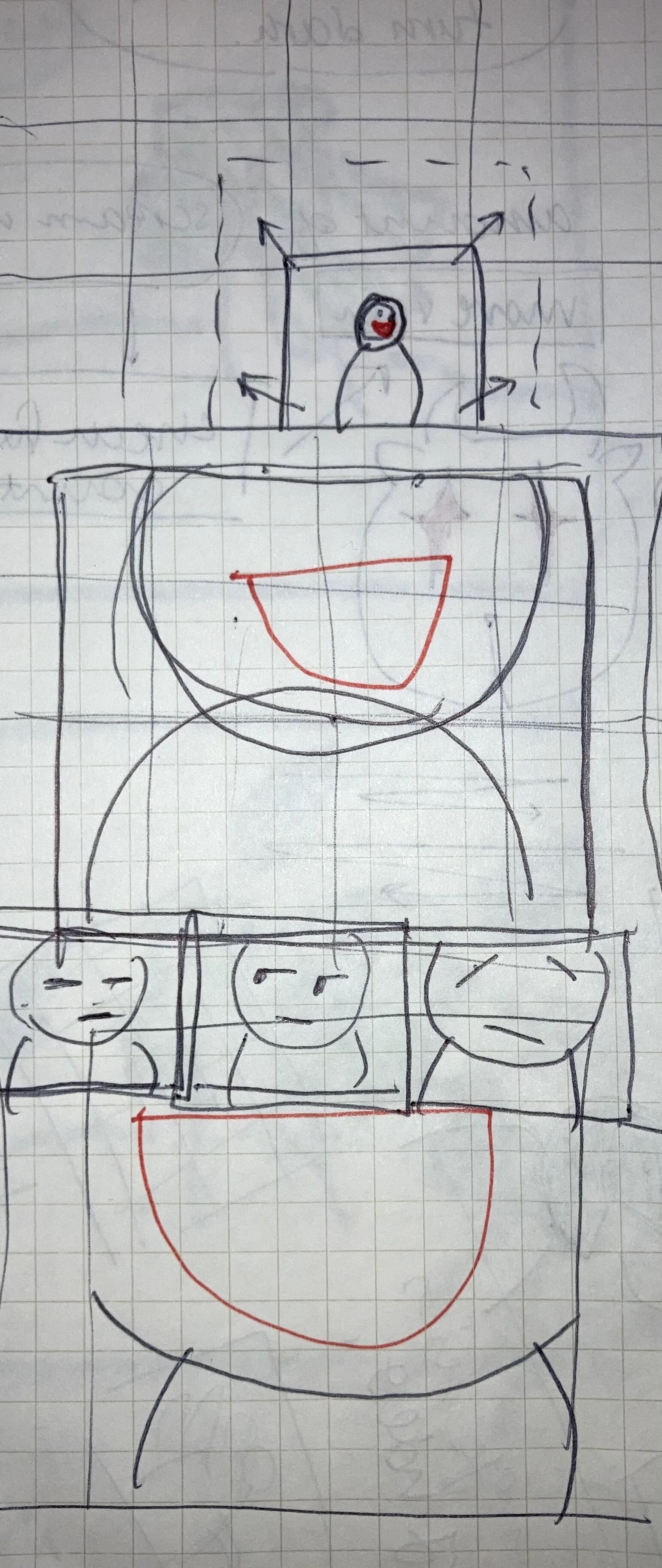

For the final project, Aditya and I built a website that mimics Zoom, with video and voice streams, but this time with consequences.

The idea is that the longer someone talks, the bigger their video gets, physically taking up more space. This design feature is a jab at the standard, static video-conferencing software we are stuck with while we wait out the pandemic. The scaling worked. The other planned feature, raising the pitch of a speaker's voice the longer they talked, did not, because of complications combining Web Audio with peer-to-peer object management. I will definitely try again, even though it did not work in time for the presentations.
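To make the scaling idea concrete, here is a minimal sketch of how accumulated talk time could map to a video size. It is not our actual code: the linear growth, the function name `videoScale`, and all three constants are assumptions picked for illustration.

```typescript
// Hypothetical parameters: none of these values come from the project.
const BASE_SCALE = 1.0;         // scale of a participant who has not spoken
const GROWTH_PER_SECOND = 0.05; // assumed growth per second of talking
const MAX_SCALE = 3.0;          // assumed cap so one video cannot grow forever

// Map accumulated talk time (in seconds) to a scale factor for the video.
function videoScale(talkSeconds: number): number {
  const seconds = Math.max(0, talkSeconds); // ignore negative input
  return Math.min(MAX_SCALE, BASE_SCALE + GROWTH_PER_SECOND * seconds);
}
```

In a browser, the result could be applied to a video element with something like `video.style.transform = "scale(" + videoScale(t) + ")"`. A cap is one possible design choice; letting the video grow without limit would push the joke further.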

The code was adapted from my mid-term project, the screaming portal space. The same algorithm (referenced from this), which analyzed the amplitude of each voice channel to gauge how loudly people were screaming, was reused here to measure how long each person's amplitude stayed above a threshold, and therefore how long they had been talking.
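The threshold idea can be sketched roughly as follows. This is an illustration under assumptions, not the referenced algorithm: it uses root-mean-square (RMS) amplitude as the loudness measure, and the names `rmsAmplitude` and `TalkTimer`, as well as the default threshold of 0.1, are hypothetical.

```typescript
// RMS amplitude of one frame of audio samples in the range [-1, 1].
// RMS is an assumed loudness measure; the original may use another.
function rmsAmplitude(samples: Float32Array): number {
  if (samples.length === 0) return 0;
  const sumSquares = samples.reduce((acc, s) => acc + s * s, 0);
  return Math.sqrt(sumSquares / samples.length);
}

// Accumulates how long one voice channel has been above the threshold.
class TalkTimer {
  private talkSeconds: number = 0;
  private threshold: number;

  // The default threshold is a guess and would need tuning per microphone.
  constructor(threshold: number = 0.1) {
    this.threshold = threshold;
  }

  // Call once per audio frame; frameSeconds is the frame's duration.
  // Returns the total talk time so far, in seconds.
  update(samples: Float32Array, frameSeconds: number): number {
    if (rmsAmplitude(samples) > this.threshold) {
      this.talkSeconds += frameSeconds;
    }
    return this.talkSeconds;
  }
}
```

In the browser, one way to get the samples for each stream is an `AnalyserNode` from the Web Audio API, whose `getFloatTimeDomainData` method fills a `Float32Array` with the current waveform. One `TalkTimer` per participant then gives the talk time for each person.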